Making a Language Server Protocol Server's Client

Yes, you're reading that correctly. I'm not writing a generic LSP Client, I'm writing one tailor-made to test the LSP Server I'm writing. So, an LSP Server's Client. Now, if I wanted to be pedantic about it, I believe Microsoft typically refers to them as "Language Servers" - but in day-to-day usage I colloquially see and hear them referred to as LSP Servers (even in the Neovim docs). I'll use both to keep it fresh.

Anyways, I'm working on a Language Server for an upcoming project and while fiddling around to see if the Server was set up correctly, I ran into an interesting question... How the hell am I supposed to test this thing? I looked up some prior art and a surprising number of projects are manually testing their LSP functionality in VSCode (or whatever other IDE), or just assuming that if stuff breaks, users will notify them. I think I understand the reasoning: to fully test an LSP, you'd need to write a custom Client that also fully exercises the Server.

For example, in GitHub issues for the Helix editor (here and here):

On balance I don't think LSP integration tests are worth their added weight and I would prefer we test LSP features manually.

Writing a custom language server just for testing though I don't think adds enough value to justify its weight. I expect that it wouldn't be a trivial amount of code at all and that it would rarely catch places where we violate the spec or behave improperly.

I absolutely get where they're coming from. They have an LSP Client built into the editor and the goal would be a custom LSP Server for testing that their Client works correctly. To the commenter's point, to catch spec failures would probably require a ton of code to exercise all of those workflows.

However, I fundamentally don't agree with "testing LSP features manually". This is one of those cases where you could pick a reliable and stable Language Server (e.g. pyright or Rust Analyzer) and run a smoke test. There are a handful of simple things that could break, which could just as easily be caught very early in the development cycle - rather than waiting for a release, followed by "oh this broke for some reason".

Even better, you could create a suite of replay tests - where you just capture communication between the Server and Client - and feed some of the data back into either the Client or Server. My guess is that a few monolithic tests would capture a majority of the functionality, with a pretty small comparative effort.
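To make the replay idea a bit more concrete, here's a rough Python sketch. Everything in it is made up for illustration: the capture format (one JSON object per line, tagged with who sent it), the `replay` helper, and the `stub_server` stand-in that plays the part of the real Server. It re-sends the recorded Client messages and reports any response that differs from the recording.

```python
import json

# Hypothetical capture format: one JSON object per line, tagged with the sender.
CAPTURE = """\
{"from": "client", "message": {"jsonrpc": "2.0", "id": 1, "method": "initialize", "params": {"capabilities": {}}}}
{"from": "server", "message": {"jsonrpc": "2.0", "id": 1, "result": {"capabilities": {}}}}
{"from": "client", "message": {"jsonrpc": "2.0", "method": "initialized", "params": {}}}
{"from": "client", "message": {"jsonrpc": "2.0", "id": 2, "method": "shutdown", "params": null}}
{"from": "server", "message": {"jsonrpc": "2.0", "id": 2, "result": null}}
"""


def replay(capture, send_request):
    """Re-send recorded Client messages; return responses that differ from the recording."""
    entries = [json.loads(line) for line in capture.splitlines() if line.strip()]
    # Recorded responses, keyed by request id (Server-initiated requests are skipped).
    expected = {
        e["message"]["id"]: e["message"]
        for e in entries
        if e["from"] == "server" and "id" in e["message"] and "method" not in e["message"]
    }
    mismatches = []
    for entry in entries:
        if entry["from"] != "client":
            continue
        message = entry["message"]
        actual = send_request(message)  # expected to return None for notifications
        if "id" in message and actual != expected.get(message["id"]):
            mismatches.append((message["id"], expected.get(message["id"]), actual))
    return mismatches


def stub_server(message):
    """Stand-in for the real Server (or a transport that talks to it)."""
    if "id" not in message:
        return None  # notifications get no response
    result = {"capabilities": {}} if message["method"] == "initialize" else None
    return {"jsonrpc": "2.0", "id": message["id"], "result": result}


mismatches = replay(CAPTURE, stub_server)
```

An empty `mismatches` list means the replayed responses matched the capture. Exact equality is the bluntest possible comparison, so a real suite would probably need to ignore fields that legitimately vary between runs.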

In any case, I'm not using that project, nor am I supporting it with my time or money - so what I think about how they develop is mostly meaningless. But it was an example of a more pervasive observation, which is favouring manual testing over automated testing for LSPs.

Not Just LSPs

I also noticed this while writing my Tree-sitter grammar. Some grammars have an incredible amount of testing (looking at you Alex), where I would have faith that I could make changes and not worry about breaking anything - while most grammars have approximately 0 automated tests. On top of that, I think a lot of the existing grammars are missing performance/state checks - but that's a separate (and harder to resolve) issue that I'll come back to some day.

I'll admit that this is a mentality I don't really grok. Maybe it's because I jump around projects a lot, or come back to them sporadically, but I like to know that a project still works before I start hacking on it again. I also don't want to try to remember or follow all the test steps myself. Once my Tree-sitter grammar is "good enough", there will probably be at least 6 months before I look at it again to make a language update or bug fix. Do I really need to observe a large file and hope that my eyes and my highlighting theme make it easy for me to see if I broke something? No thank you.

Ditto for this LSP. Once it's written, I'll infrequently touch the code, but what if one of those changes is a dependency update that silently breaks something? I have to build it, run it, and hope that I run into the failure case from a specification that's over 60,000 words (with example code)? More specifically, there are 39 capability identifiers right now, and while I'm only implementing a small subset of that, my day-to-day workflow might only exercise an even smaller subset. This feels like a tractable problem.

What Can We Do Here?

With all that out of the way, what does that mean for my Language Server? It's early days, but even going through the process of verifying that my LSP was running and initialized correctly was a pain when I had to go to another tool to reload it after every change. I'm not a huge fan of test-driven development as I prefer writing the thing, maybe re-writing it again, and then writing tests after-the-fact. But I do know when it has value - and this is one of those times.

What Do I Need?

Reading through the LSP specification overview and the lifecycle messages:

A Language Server runs as a separate process and development tools communicate with the Server using the language protocol over JSON-RPC.

The current protocol specification defines that the lifecycle of a server is managed by the client (e.g. a tool like VS Code or Emacs). It is up to the client to decide when to start (process-wise) and when to shutdown a server.

So, for a reasonable integration test, I need to:

- Run my Language Server in a separate process

- Have my Test Client drive the interaction

For the purpose of testing, this is very reasonable. If the interactions were driven from the Server, that would be slightly more painful - as then the test vs Server launch times might actually matter a bit more. In this direction where the Client controls the flow, it's pretty trivial to schedule.

Another non-goal of this project is 100% coverage for every possible input/output permutation and edge case (e.g. a 4 GB file with no line breaks). The goal is that I can reliably test that a reasonable LSP Client:

- initializes correctly

- receives the capabilities I advertise

- can perform a simple interaction with each capability (sanity check)

- everything shuts down correctly.

Since the workflow is a request/response style RPC driven by the Client, it shouldn't be that much harder to test than a (somewhat stateful) function call once some basic test structures are in place.
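Here's a minimal sketch of that checklist as a Python test driver, assuming the Server talks over stdio with the spec's Content-Length framing. The `FAKE_SERVER` script is a stand-in so the example runs on its own (the real test would launch the actual Language Server binary), and the `hoverProvider` capability it advertises is just a placeholder. The helper names `send`, `receive`, and `run_lifecycle` are also my own.

```python
import json
import subprocess
import sys

# Stand-in server: answers "initialize" with a fixed capabilities result,
# any other request with a null result, and quits on "exit".
FAKE_SERVER = r'''
import json, sys
inp, out = sys.stdin.buffer, sys.stdout.buffer
while True:
    length = 0
    while (line := inp.readline()) not in (b"\r\n", b""):
        if line.lower().startswith(b"content-length:"):
            length = int(line.split(b":")[1].strip())
    if length == 0:
        break
    msg = json.loads(inp.read(length))
    if msg["method"] == "exit":
        break
    if "id" in msg:
        result = {"capabilities": {"hoverProvider": True}} if msg["method"] == "initialize" else None
        body = json.dumps({"jsonrpc": "2.0", "id": msg["id"], "result": result}).encode()
        out.write(b"Content-Length: %d\r\n\r\n" % len(body) + body)
        out.flush()
'''


def send(proc, message):
    """Frame one JSON-RPC message and write it to the Server's stdin."""
    body = json.dumps(message).encode("utf-8")
    proc.stdin.write(b"Content-Length: %d\r\n\r\n" % len(body) + body)
    proc.stdin.flush()


def receive(proc):
    """Read one framed message from the Server's stdout."""
    length = 0
    while (line := proc.stdout.readline()) not in (b"\r\n", b""):
        if line.lower().startswith(b"content-length:"):
            length = int(line.split(b":")[1].strip())
    return json.loads(proc.stdout.read(length))


def run_lifecycle(command):
    """Drive initialize -> initialized -> shutdown -> exit; return (capabilities, exit code)."""
    proc = subprocess.Popen(command, stdin=subprocess.PIPE, stdout=subprocess.PIPE)
    try:
        send(proc, {"jsonrpc": "2.0", "id": 1, "method": "initialize", "params": {"capabilities": {}}})
        capabilities = receive(proc)["result"]["capabilities"]
        send(proc, {"jsonrpc": "2.0", "method": "initialized", "params": {}})
        send(proc, {"jsonrpc": "2.0", "id": 2, "method": "shutdown", "params": None})
        assert receive(proc)["id"] == 2
        send(proc, {"jsonrpc": "2.0", "method": "exit", "params": None})
        return capabilities, proc.wait(timeout=5)
    finally:
        if proc.poll() is None:
            proc.kill()  # never leave a hung Server behind
            proc.wait()
        proc.stdin.close()
        proc.stdout.close()


capabilities, exit_code = run_lifecycle([sys.executable, "-c", FAKE_SERVER])
```

With these helpers, each capability sanity check becomes one more `send`/`receive` pair between `initialized` and `shutdown`, which is about as close to a stateful function call as this gets.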

What Language Am I Using for the Server?

I'm going to write it in a memory unsafe language, then shake my head and rewrite it in Rust, obviously.

No, but seriously... I will be writing it in Rust. While evangelists might hate me for saying this, Rust isn't the answer to the ultimate question of life, the universe, and everything. It won't even be in 7.5 million years when Rust v42 comes out.

This, however, is a great use case for Rust. I want a long-running, cross-platform, isolated binary that's easy to distribute (and update infrequently) to users on who-knows-what machine. There's going to be a large number of back-and-forth transactions (of many data types) that require sometimes complex transformations of transactional data. I'm tightly adhering to a pre-existing, thorough specification without much "creative exploration". I want the transactions to be as fast and predictable as possible and the incoming data will have a wide variety of content sizes and (in the worst-cases) I might need to have thread or process pools chewing through data. I'm willing to spend extra time during development to make sure everything is rock solid.

I can't really think of what language would be better than Rust given those factors. A built-in, per-request, arena allocator would be nice to have though to really keep memory in check.

This decision comes with a couple of perks too. As I mentioned earlier, I think Rust Analyzer is a great example of an LSP from a stability and functionality point-of-view (it is, so far, the only LSP that hasn't failed on me at runtime this year). The team maintaining it has done a great open-source courtesy by splitting off a skeleton of the LSP Server which now just requires setup, types (handled currently by lsp-types, but eventually generated by lsprotocol from the specification), and lets you focus on the language-specific implementation work - which is the real value of your LSP, not the standardized boilerplate:

This crate handles protocol handshaking and parsing messages, while you control the message dispatch loop yourself.

What Language am I Using for the Test Client?

Not Rust, that's for sure...

I mean, I could, and it would be cool to share some code or dependencies between the Server and Client I guess... But, there's really no need to. None of the requirements that made Rust a great choice for the Server make it a good choice for the Client. The Client will be running tests, and I'll be adding, removing, changing data, and just coming up with whatever I can to faithfully test the Server and then faithfully break the Server. The iteration cycle on the Client and tests will be pretty fast during development, to the point that I wouldn't even want to compile the tests.

In fact, until I start getting into sanity testing capabilities, I won't even be using LSP types, I'll just be replaying lifecycle strings that I get from Neovim and VSCode when they communicate with other LSPs:

{"jsonrpc":"2.0","id":1,"method":"initialize","params":{"capabilities":{}}}

{"jsonrpc":"2.0","method":"initialized","params":{}}

...

{"jsonrpc":"2.0","id":42,"method":"shutdown","params":null}

{"jsonrpc":"2.0","method":"exit","params":null}

Maybe once my LSP is stable, I'll port the tests to Rust and use proper typing - but the value of that would almost exclusively be to be able to run cargo test only, rather than cargo test followed by pytest... And I mean... I guess that's something?

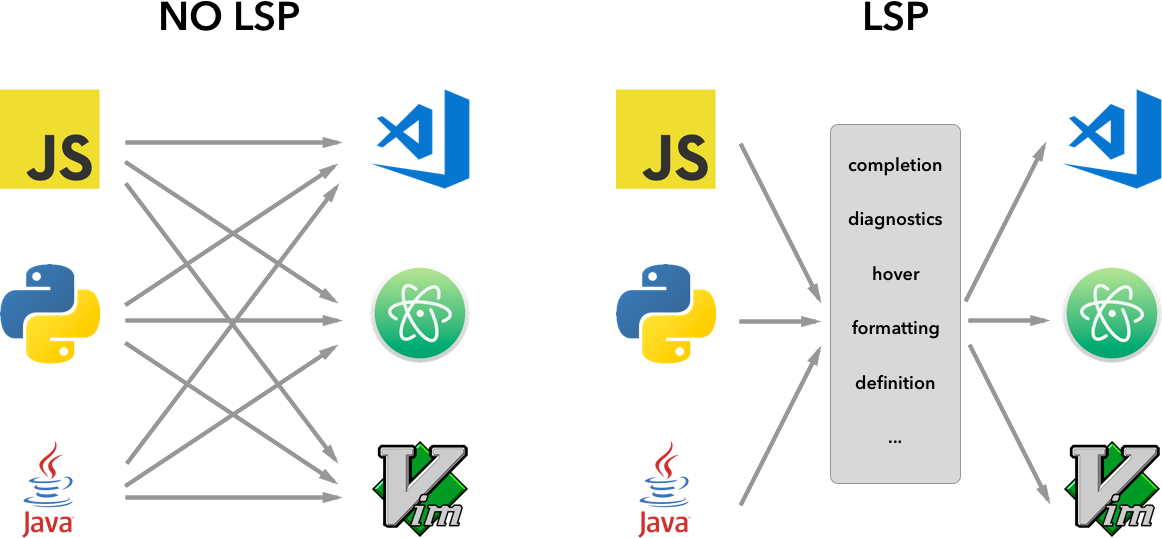

This is one of the really cool features of an LSP actually. That I can write the Client in any language, I can write the Server in any language - and they communicate using JSON-RPC through one of several transport channels (stdio, pipe, socket, or nodejs-specific stuff). From my quick research, stdio seems to be the de facto default - and it looks like if I want to support anything else, I would need to add the ability to start the Server with command line arguments. It would be a nice addition, but stdio seems to work perfectly fine at the moment.
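One detail worth spelling out for the stdio transport: JSON-RPC strings aren't written to the pipe raw. The LSP base protocol puts a `Content-Length` header (a byte count) and a blank line in front of each JSON body. Below is a small Python sketch of that framing; the `frame` and `read_message` names are mine, and an in-memory stream stands in for the Server's stdin/stdout.

```python
import io
import json


def frame(payload: str) -> bytes:
    """Wrap a JSON-RPC string in the LSP base-protocol header."""
    body = payload.encode("utf-8")
    # Content-Length counts bytes, not characters.
    header = f"Content-Length: {len(body)}\r\n\r\n".encode("ascii")
    return header + body


def read_message(stream) -> dict:
    """Read one framed message from a binary stream and decode its JSON body."""
    length = None
    while True:
        line = stream.readline()
        if not line:
            raise EOFError("stream closed before a full header arrived")
        if line == b"\r\n":  # a blank line ends the header section
            break
        name, _, value = line.decode("ascii").partition(":")
        if name.strip().lower() == "content-length":
            length = int(value.strip())
    if length is None:
        raise ValueError("missing Content-Length header")
    return json.loads(stream.read(length).decode("utf-8"))


INITIALIZE = '{"jsonrpc":"2.0","id":1,"method":"initialize","params":{"capabilities":{}}}'
wire = frame(INITIALIZE)                  # what would be written to the Server's stdin
decoded = read_message(io.BytesIO(wire))  # round-trip through an in-memory stream
```

The same two functions should work unchanged against a subprocess's binary pipes, since they only rely on `readline()` and `read(n)`.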

Other Language Clients

I'll be starting with a Python test Client, and as I mentioned, maybe I'll get up the ambition some day to port that to Rust if I find value. Because Microsoft and VSCode are a big driver for the Language Server Protocol, the NodeJS ecosystem has a lot of first-party support that just isn't there in other languages:

vscode-languageclient

vscode-languageserver

vscode-languageserver-textdocument

vscode-languageserver-protocol

vscode-languageserver-types

vscode-jsonrpc

As I'm trying to write LESS Javascript and Typescript, rather than more - I'll be skipping this one.

However, as always, I'm wondering what a Jai Client might look like. The C-bindings generator is fantastic, so if there are C LSP types anywhere to save me the hassle of writing them, this would be a pretty fast Client too. However, this is less of a real-world use case and more about learning the language better and coming up with a set of tooling that I'm comfortable with.

First Steps

My first steps in building my first LSP Server are already done, in that I've stood up a minimal Server application that accepts communication over stdio and I tested this using named pipes in two terminals. The next step is to create my test Client and test scaffold to avoid using VSCode or Neovim during the preliminary LSP development phases.